Tensorflow 2.0.0 gpu Performance Issue on RTX 2060, win 10 · Issue #27819 · tensorflow/tensorflow · GitHub

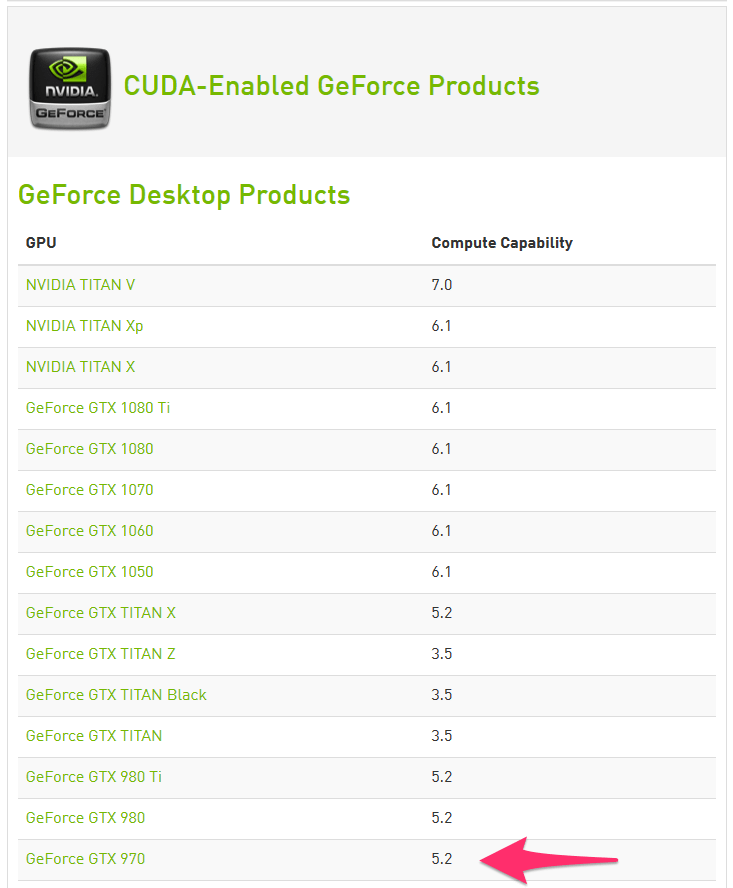

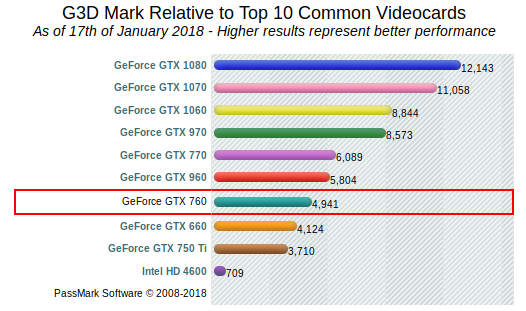

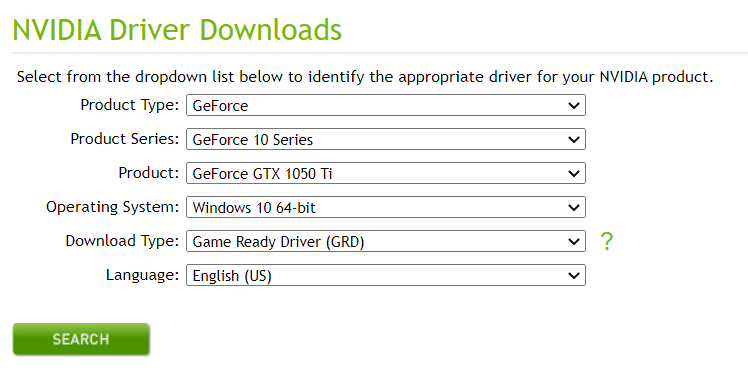

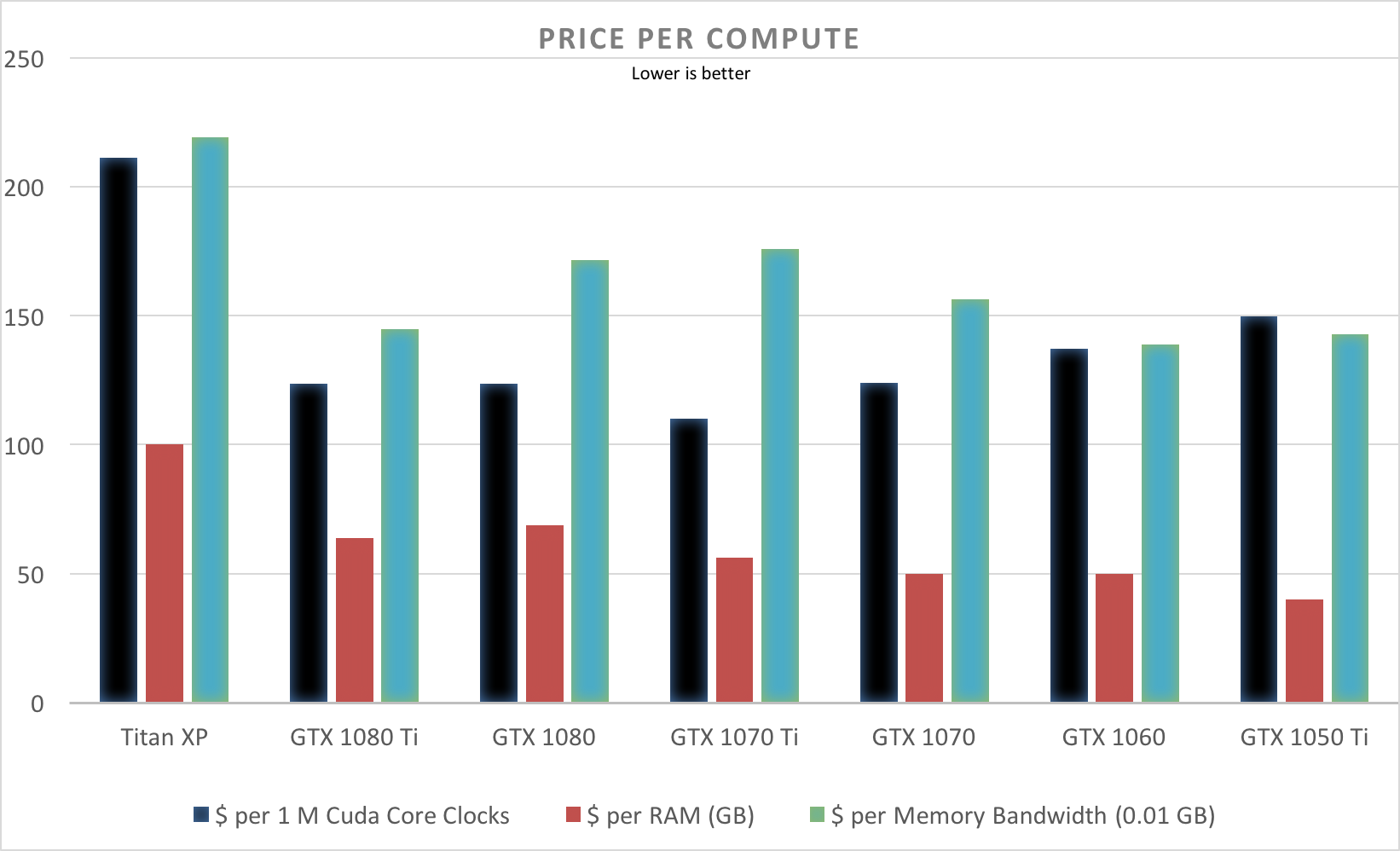

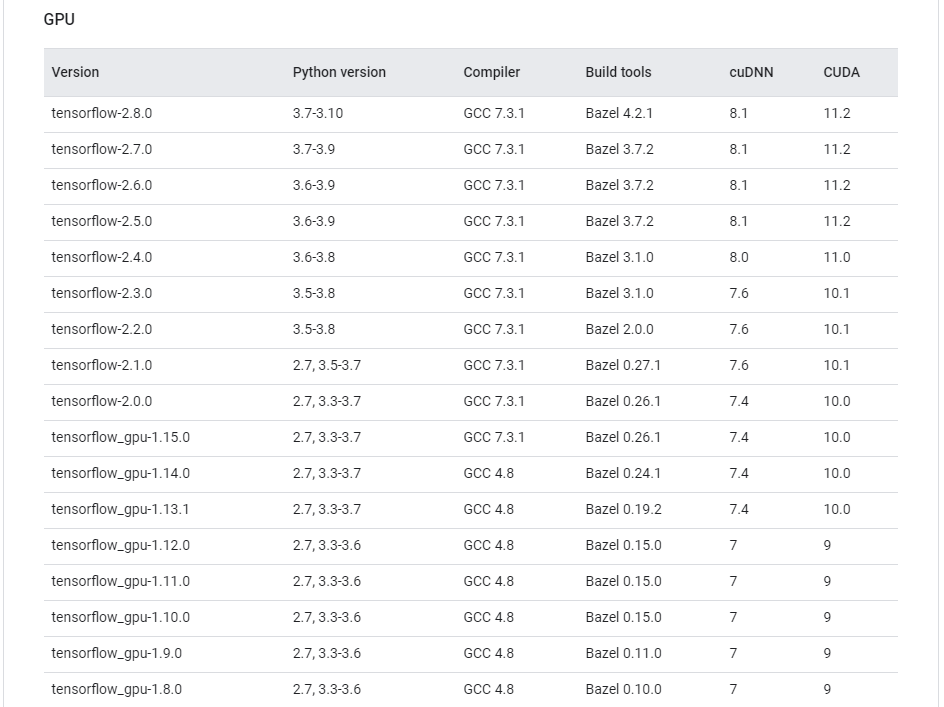

Installing TensorFlow, CUDA, cuDNN with Anaconda for GeForce GTX 1050 Ti | by Shaikh Muhammad | Medium

How Good is RTX 3060 for ML AI Deep Learning Tasks and Comparison With GTX 1050 Ti and i7 10700F CPU - YouTube

They say that AMD does not support machine learning... Today I got TensorFlow up and running on my 6900 XT (using tensorflow-directml) and started fine-tuning GPT2. Pictured below: what my GPU looks like.